It is a sad truth that any health crisis will spawn its own pandemic of misinformation.

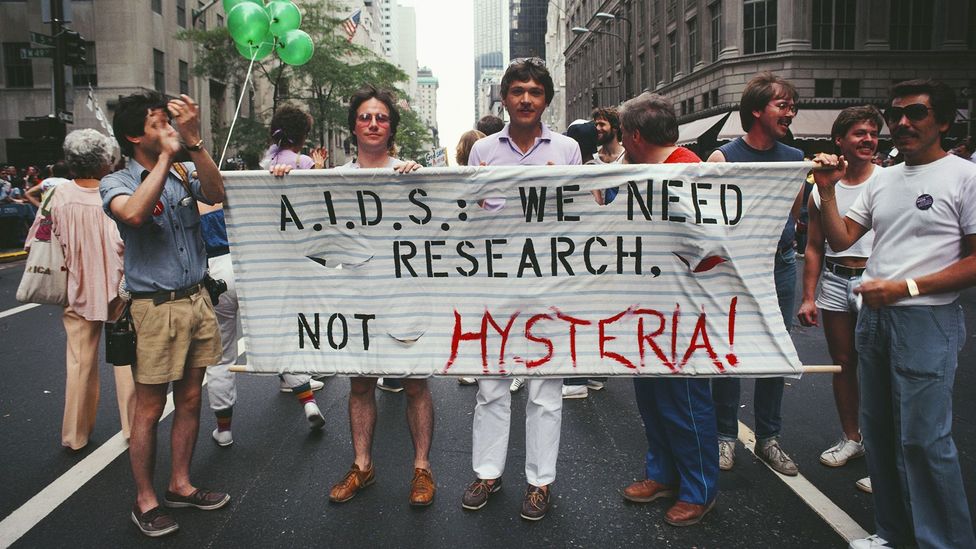

In the 80s, 90s, and 2000s we saw the spread of dangerous lies about Aids – from the belief that the HIV virus was created by a government laboratory to the idea that the HIV tests were unreliable, and even the spectacularly unfounded theory that it could be treated with goat’s milk. These claims increased risky behaviour and exacerbated the crisis.

Now, we are seeing a fresh inundation of fake news – this time around the coronavirus pandemic. From Facebook to WhatsApp, frequently shared misinformation includes everything from what caused the outbreak to how you can prevent becoming ill.

In past decades, dangerous lies spread about Aids which exacerbated the crisis (Credit: Getty Images)

We’ve debunked several claims here on BBC Future, including misinformation around how sunshine, warm weather and drinking water can affect the coronavirus. The BBC’s Reality Check team is also checking popular coronavirus claims, and the World Health Organization is keeping a myth-busting page regularly updated too.

At worst, the ideas themselves are harmful – a recent report from one province in Iran found that more people had died from drinking industrial-strength alcohol, based on a false claim that it could protect you from Covid-19, than from the virus itself. But even seemingly innocuous ideas could lure you and others into a false sense of security, discouraging you from adhering to government guidelines, and eroding trust in health officials and organisations.

There’s evidence these ideas are sticking. One poll by YouGov and the Economist in March 2020 found 13% of Americans believed the Covid-19 crisis was a hoax, for example, while a whopping 49% believed the epidemic might be man-made. And while you might hope that greater brainpower or education would help us to tell fact from fiction, it is easy to find examples of many educated people falling for this false information.

Just consider the writer Kelly Brogan, a prominent Covid-19 conspiracy theorist; she has a degree from the Massachusetts Institute of Technology and studied psychiatry at Cornell University. Yet she has shunned clear evidence of the virus’s danger in countries like China and Italy. She even went as far as to question the basic tenets of germ theory itself while endorsing pseudoscientific ideas.

Even some world leaders – who you would hope to have greater discernment when it comes to unfounded rumours – have been guilty of spreading inaccurate information about the risk of the outbreak and promoting unproven remedies that may do more harm than good, leading Twitter and Facebook to take the unprecedented step of removing their posts.

Fortunately, psychologists are already studying this phenomenon. And what they find might suggest new ways to protect ourselves from lies and help stem the spread of this misinformation and foolish behaviour.

Information overload

Part of the problem arises from the nature of the messages themselves. We are bombarded with information all day, every day, and we therefore often rely on our intuition to decide whether something is accurate. As BBC Future has described in the past, purveyors of fake news can make their message feel “truthy” through a few simple tricks, which discourages us from applying our critical thinking skills – such as checking the veracity of its source. As the authors of one paper put it: “When thoughts flow smoothly, people nod along.”

Eryn Newman at Australian National University, for instance, has shown that the simple presence of an image alongside a statement increases our trust in its accuracy – even if it is only tangentially related to the claim. A generic image of a virus accompanying some claim about a new treatment, say, may offer no proof of the statement itself, but it helps us visualise the general scenario. We take that “processing fluency” as a sign that the claim is true.

The mere presence of an image alongside a statement increases our trust in its accuracy (Credit: Getty Images)

For similar reasons, misinformation will include descriptive language or vivid personal stories. It will also feature just enough familiar facts or figures – such as mentioning the name of a recognised medical body – to make the lie within feel convincing, allowing it to tether itself to our previous knowledge.

Even the simple repetition of a statement – whether the same text, or over multiple messages – can increase the “truthiness” by increasing feelings of familiarity, which we mistake for factual accuracy. So, the more often we see something in our news feed, the more likely we are to think that it’s true – even if we were originally sceptical.

Sharing before thinking

These tricks have long been known by propagandists and peddlers of misinformation, but today’s social media may exaggerate our gullible tendencies. Recent evidence shows that many people reflexively share content without even thinking about its accuracy.

Gordon Pennycook, a leading researcher into the psychology of misinformation at the University of Regina, Canada, asked participants to consider a mixture of true and false headlines about the coronavirus outbreak. When they were specifically asked to judge the accuracy of the statements, the participants said the fake news was true about 25% of the time. When they were simply asked whether they would share the headline, however, around 35% said they would pass on the fake news – 10 percentage points more.

“It suggests people were sharing material that they could have known was false, if they had thought about it more directly,” Pennycook says. (Like much of the cutting-edge research on Covid-19, this research has not yet been peer-reviewed, but a pre-print has been uploaded to the Psyarxiv website.)

Perhaps their brains were engaged in wondering whether a statement would get likes and retweets rather than considering its accuracy. “Social media doesn’t incentivise truth,” Pennycook says. “What it incentivises is engagement.”

Research suggests that some people share material they would know was false if they thought about it more directly (Credit: Getty Images)

Or perhaps they thought they could shift responsibility on to others to judge: many people have been sharing false information with a sort of disclaimer at the top, saying something like “I don’t know if this is true, but…”. They may think that if there’s any truth to the information, it could be helpful to friends and followers, and if it isn’t true, it’s harmless – so the impetus is to share it, not realising that sharing causes harm too.

Whether it’s promises of a homemade remedy or claims about some kind of dark government cover-up, the promise of eliciting a strong response in their followers distracts people from the obvious question.

This question should be, of course: is it true?

Override reactions

Classic psychological research shows that some people are naturally better at overriding their reflexive responses than others. This finding may help us understand why some people are more susceptible to fake news than others.

Researchers like Pennycook use a tool called the “cognitive reflection test” or CRT to measure this tendency. To understand how it works, consider the following question:

- Emily’s father has three daughters. The first two are named April and May. What is the third daughter’s name?

Did you answer June? That’s the intuitive answer that many people give – but the correct answer is, of course, Emily.

To come to that solution, you need to pause and override that initial gut response. For this reason, CRT questions are not so much a test of raw intelligence as a test of someone’s tendency to employ their intelligence by thinking things through in a deliberative, analytical fashion, rather than going with their initial intuitions. The people who don’t do this are often called “cognitive misers” by psychologists, since they may be in possession of substantial mental reserves, but they don’t “spend” them.

Cognitive miserliness renders us susceptible to many cognitive biases, and it also seems to change the way we consume information (and misinformation).

When it came to the coronavirus statements, for instance, Pennycook found that people who scored badly on the CRT were less discerning in the statements that they believed and were willing to share.

Matthew Stanley, at Duke University in Durham, North Carolina, has reported a similar pattern in people’s susceptibility to the coronavirus hoax theories. Remember that around 13% of US citizens believed this theory, which could potentially discourage hygiene and social distancing. “Thirteen percent seems like plenty to make this [virus] go around very quickly,” Stanley says.

Testing participants soon after the original YouGov/Economist poll was conducted, he found that people who scored worse on the CRT were significantly more susceptible to these flawed arguments.

These cognitive misers were also less likely to report having changed their behaviour to stop the disease from spreading – such as handwashing and social distancing.

Stop the spread

Knowing that many people – even the intelligent and educated – have these “miserly” tendencies to accept misinformation at face value might help us to stop the spread of misinformation.

Given the work on truthiness – the idea that we “nod along when thoughts flow smoothly” – organisations attempting to debunk a myth should avoid being overly complex.

Instead, they should present the facts as simply as possible – preferably with aids like images and graphs that make the ideas easier to visualise. As Stanley puts it: “We need more communications and strategy work to target those folks who are not as willing to be reflective and deliberative.” It’s simply not good enough to present a sound argument and hope that it sticks.

If they can, these campaigns should avoid repeating the myths themselves. The repetition makes the idea feel more familiar, which could increase perceptions of truthiness. That’s not always possible, of course. But campaigns can at least try to make the true facts more prominent and more memorable than the myths, so they are more likely to stick in people’s minds. (It is for this reason that I’ve given as little information as possible about the hoax theories in this article.)

When it comes to our own online behaviour, we might try to disengage from the emotion of the content and think a bit more about its factual basis before passing it on. Is it based on hearsay or hard scientific evidence? Can you trace it back to the original source? How does it compare to the existing data? And is the author relying on common logical fallacies to make their case?

One thing we can do is simply think about a post’s factual basis before we pass it on (Credit: Getty Images)

These are the questions that we should be asking – rather than whether or not the post is going to start amassing likes, or whether it “could” be helpful to others. And there is some evidence that we can all get better at this kind of thinking with practice.

Pennycook suggests that social media networks could nudge their users to be more discerning with relatively straightforward interventions. In his experiments, he found that asking participants to rate the factual accuracy of a single claim primed participants to start thinking more critically about other statements, so that they were more than twice as discerning about the information they shared.

In practice, it might be as simple as a social media platform providing the occasional automated reminder to think twice before sharing, though careful testing could help the companies to find the most reliable strategy, he says.

There is no panacea. Like our attempts to contain the virus itself, we are going to need a multi-pronged approach to fight the dissemination of dangerous and potentially life-threatening misinformation.

And as the crisis deepens, it will be everyone’s responsibility to stem that spread.

–

David Robson is the author of The Intelligence Trap, which examines why smart people act foolishly and the ways we can all make wiser decisions. He is @d_a_robson on Twitter.

As an award-winning science site, BBC Future is committed to bringing you evidence-based analysis and myth-busting stories around the new coronavirus. You can read more of our Covid-19 coverage here.

–

Join one million Future fans by liking us on Facebook, or follow us on Twitter or Instagram.

If you liked this story, sign up for the weekly bbc.com features newsletter, called “The Essential List”. A handpicked selection of stories from BBC Future, Culture, Worklife, and Travel, delivered to your inbox every Friday.

Social media is rife with posts disparaging the vaccine hesitant – but these reactions to a complex and nuanced issue are doing more harm than good.

There should be no doubt about it: Covid-19 vaccines are saving lives.

Consider some recent statistics from the UK. In a study tracking more than 200,000 people, nearly every single participant had developed antibodies against the virus within two weeks of their second dose. And despite initial worries that the current vaccines may be less effective against the Delta variant, analyses suggest that both the AstraZeneca and the Pfizer-BioNTech jabs reduce hospitalisation rates by 92-96%. As many health practitioners have repeated, the risks of severe side effects from a vaccine are tiny in comparison to the risk of the disease itself.

Yet a sizeable number of people are still reluctant to get the shots.

According to a recent report by the International Monetary Fund, that ranges from around 10-20% of people in the UK to around 50% in Japan and 60% in France.

The result is becoming something of a culture war on social media, with many online commentators claiming that the vaccine hesitant are simply ignorant or selfish. But psychologists who specialise in medical decision-making argue these choices are often the result of many complicating factors that need to be addressed sensitively, if we are to have any hope of reaching population-level immunity.

The 5Cs

First, some distinctions. While it is tempting to assume that anyone who refuses a vaccine holds the same beliefs, the fears of most vaccine hesitant people should not be confused with the bizarre theories of staunch anti-vaxxers.

“They’re very vocal, and they have a strong presence offline and online,” says Mohammad Razai at the Population Health Research Institute, St George’s, University of London, who has written about the various psychological and social factors that can influence people’s decision-making around vaccines. “But they’re a very small minority.”

The vast majority of vaccine-hesitant people do not have a political agenda and are not committed to an anti-scientific cause: they are simply undecided about their choice to take the injection.

The good news is that many people who were initially hesitant are changing their mind.

“But even a delay is considered a threat to health because viral infections spread very quickly,” says Razai. This would have been problematic even if we were still dealing with the older variants of the virus, but the higher transmissibility of the new Delta variant has increased the urgency of reaching as many people as quickly as possible.

Fortunately, scientists began studying vaccine hesitancy long before Sars-Cov-2 was first identified in Wuhan in December 2019, and they have explored various models which attempt to capture the differences in people’s health behaviour. One of the most promising is known as the 5Cs model, which considers the following psychological factors:

- Confidence: the person’s trust in the vaccines’ efficacy and safety, the health services offering them, and the policy makers deciding on their rollout

- Complacency: whether or not the person considers the disease itself to be a serious risk to their health

- Calculation: the individual’s engagement in extensive information searching to weigh up the costs and benefits

- Constraints (or convenience): how easy it is for the person in question to access the vaccine

- Collective responsibility: the willingness to protect others from infection, through one’s own vaccination

In 2018, Cornelia Betsch at the University of Erfurt in Germany and colleagues asked participants to rate a series of statements that measured each of the 5Cs, and then compared the results with their actual uptake of relevant procedures, such as the influenza or the HPV vaccine. Sure enough, they found that the 5Cs could explain a large amount of the variation in people’s decisions, and consistently outperformed many other potential predictors – such as questionnaires that focused more exclusively on issues of trust without considering the other factors.

In currently unpublished research, Betsch recently used the model to predict people’s uptake of the Covid-19 vaccines, and her results so far suggest that the 5Cs model can explain the majority of the variation in people’s decisions.

There will be other contributing factors, of course. A recent study from the University of Oxford suggests that a fear of needles is a major barrier for around 10% of the population. But the 5Cs approach certainly seems to capture the most common reasons for vaccine hesitancy.

Confirmation bias

When considering these different factors and the ways they may be influencing people’s behaviour, it is also useful to examine the various cognitive biases that are known to sway our perceptions.

Consider the first two Cs – the confidence in the vaccine, and the complacency about the dangers of the disease itself.

Jessica Saleska at the University of California, Los Angeles points out that humans have two seemingly contradictory tendencies – a “negativity bias” and an “optimism bias” that can each skew people’s appraisals of the risks and benefits.

The negativity bias concerns the way you appraise events beyond your control. “When you’re presented with negative information, that tends to stick in your mind,” says Saleska. The optimism bias, in contrast, concerns your beliefs about yourself – whether you think you are fitter and healthier than the average person. These biases may work independently, meaning that you may focus on the dangerous side effects of the vaccines while simultaneously believing that you are less likely to suffer from the disease, a combination that would reduce confidence and increase complacency.

Then there’s the famous confirmation bias, which can also twist people’s perceptions of the risks of the virus through the ready availability of misinformation from dubious sources that exaggerate the risks of the vaccines. This reliance on misleading resources means that people who score highly on the “calculation” measure of the 5Cs scale – the people who actively look for data – are often more vaccine hesitant than people who score lower. “If you already think the vaccination could be risky, then you type in ‘is this vaccination dangerous?’, then all you are going to find is the information that confirms your prior view,” says Betsch.

Remember that these psychological tendencies are extremely common. Even if you have accepted the vaccine, they have probably influenced your own decision making in many areas of life. To ignore them, and to assume that the vaccine hesitant are somehow wilfully ignorant, is itself a foolish stance.

Health authorities need to produce simple, easy-to-understand information which shows the vaccine is safe (Credit: Tang Ming Tung/Getty Images)

Nor should we forget the many social factors that might influence people’s uptake – the “constraints/convenience” factor in the 5Cs. Quite simply, the perception that a vaccine is difficult to access will only discourage people who are already sitting on the fence. When we spoke, Betsch suggested that this might have slowed the uptake in Germany, which has a very complicated system to identify who is eligible to receive the vaccine at any one time. People would respond much more quickly, she says, if they received automatic notifications.

Razai agrees that we need to consider the question of convenience, particularly for those in poorer communities who may struggle with the time and expense of the journey to a vaccination centre. “Travelling to and from that may be a huge issue for most people who are on minimum wage or unemployment benefits,” he says. That’s why it’s often best for the vaccines to be administered in local community centres. “I think there has been anecdotal evidence of it being more successful in places of worship, mosque, gurdwaras, and churches.”

Finally, we need to be aware of the context of people’s decisions, he says – such as the structural racism that might have led certain ethnic groups to have lower overall trust in medical authorities. It is easy to dismiss someone else’s decisions if you don’t understand the challenges they face in their day-to-day lives.

Opening a dialogue

So what can be done?

There is no easy solution, but health authorities can continue to provide easy-to-digest, accurate information that addresses the major concerns. According to a recent report by Imperial College London’s Institute of Global Health Innovation (IGHI), the major barriers continue to be patients’ concerns about the side effects and fears that the vaccines haven’t been adequately tested.

For the former, graphics showing the relative risks of the vaccines, compared to the actual disease, can provide some context. For the latter, Razai suggests that we need more education about the history of the vaccines’ development. The use of mRNA in vaccines has been studied for decades, for instance – with long trials testing its safety. This meant the technique could be quickly adapted for the pandemic. “None of the technology that has been used would be in any way harmful because we have used these technologies in other areas in healthcare and research,” Razai says.

Sarah Jones, a doctoral researcher who co-led the IGHI report, suggests a targeted approach will be necessary. “I would urge governments to stop thinking they can reach the mass of niches out there with one mass-market vaccine message, and work more creatively with many effective communications partners,” she says. That might involve closer collaborations with the influencer role models within each community, she says, who can provide “consistent and accurate information” about the vaccines’ risks and benefits.

Making vaccine centres easy for locals to get to – like this one in India – makes them more likely to be used (Credit: Sunil Ghosh/Hindustan Times/Getty Images)

However they choose to deliver the information, health services need to make it clear that they are engaging in an open dialogue, Razai says – rather than simply dismissing people’s concerns out of hand. “We have to listen to people’s concerns, acknowledge them, and give them information so they can make an informed decision.”

Saleska agrees that it’s essential to engage in a two-way conversation – and that’s something that we could all learn as we discuss these issues with our friends and family. “Being respectful and recognising their concerns – I think that could actually be more important than just spitting out the facts or statistics,” she says. “A lot of the time, it’s more about the personal connection than it is about the actual information that you provide.”

* David Robson is the author of The Intelligence Trap: Why Smart People Do Dumb Things. His next book is The Expectation Effect: Transform Your Health, Fitness, Productivity, Happiness and Ageing, to be published in early 2022. He is @d_a_robson on Twitter.
